Alright, so let’s begin again with some LiDAR processing in PostGIS. I really liked this discussion of multipoint conversions of LiDAR and other point datasets, but we won’t address making things more efficient here (yet). Besides, these aren’t just points– we do want to retain their attribution… .

Now, any regular database user will recognize my nubeness reading this post. If you are that person, please comment. I’m looking to learn… .

In the LiDAR dataset I have, there are elevations for all points, ground points, vegetation points, building points etc. I’d like to know about how tall my vegetation is by calculating the difference between the height of the vegetation point and the nearest ground point. So, I need to know what the nearest ground point it, and difference the two heights. Since I am interested in the nearest ground point that is as close to directly beneath my vegetation point as possible, we’ll compute nearest neighbor in two dimensions.

I’m going to, once again, take advantage of the resources at BostonGIS, and their Nearest Neighbor function. Install and use according to their directions… .

So, I’ve downloaded the state LiDAR dataset for an Ohio County, and want to do some processing on it. It does contain some fields in it’s original LAS form we might want to keep, specifically (looking at our statement to create the table:

CREATE TABLE lidar ( x numeric, y numeric, z numeric, intensity integer, tnumber integer, number integer, class integer, id integer, vnum integer, ) WITH (OIDS=FALSE); ALTER TABLE lidar OWNER TO postgres;

I convert from las to csv with the las2txt utility (as before):

#!/bin/bash x=0 for f in $( ls *.las); do x=`expr $x + 1` echo $x $f las2txt --parse xyzinrcpM -sep komma $f $f.csv done

I want to copy from CSV using local user permissions. It’s a little less efficient to do this than to have the database directly read from a CSV file, but it has the advantage that I don’t have to muck about giving my database super user file level permissions on my OS. With the following steps, I’m doing a sequential test of each step, so while the last step converted all my las files to CSV, I’m still exploring this process with a single file.

So, creating a script file called copy.txt with the following contents

\copy lidar from '/Users/blaa/Documents/GIS/Vinton/OSIP_VIN_LiDAR/s2035470.las.csv' with csv

We load a single block of LiDAR data in:

psql -U postgres -d Vinton -f copy.txt

(note, we could have used a pipe to accomplish this)

Now to add a geometry field, and populate that with the info in our x and y fields (remember, I don’t want these to be 3D points, but 2D points with a z attribute).

SELECT AddGeometryColumn('public','lidar','the_geom',91023236,'POINT', 2);

UPDATE lidar SET the_geom = ST_SetSRID(ST_MakePoint(x,y),91023236);

It’d be good to have a spatial index and primary key… .

CREATE INDEX lidar_the_geom_idx ON lidar USING gist(the_geom); ALTER TABLE lidar ADD PRIMARY KEY (vnum);

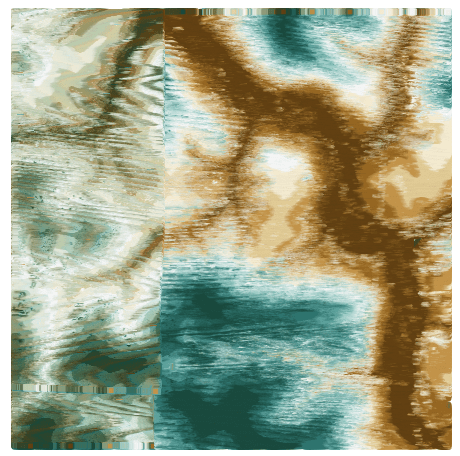

And view in uDig:

OK. Now, I now that class 1 is ground and class 5 is vegetation, so, to simplify future processing, I will split this out into two tables (although subselects or views might work just as well here… ).

CREATE TABLE lidar_veg AS SELECT * FROM lidar WHERE class = 5;CREATE TABLE lidar_ground AS SELECT * FROM lidar WHERE class = 1;

CREATE INDEX lidar_veg_the_geom_idx ON lidar USING gist(the_geom); CREATE INDEX lidar_ground_the_geom_idx ON lidar USING gist(the_geom); ALTER TABLE lidar_veg ADD PRIMARY KEY (vnum); ALTER TABLE lidar_ground ADD PRIMARY KEY (vnum);

The nearest neighbor function is “pgis_fn_nn”, and takes a few options. We’ll try this first with just one point. The vegetation point is in the middle of these 4. There are ground points all around:

SELECT pgis_fn_nn(b.the_geom, 20,1,4,'lidar_ground','true','vnum','the_geom') FROM lidar_veg AS b WHERE b.vnum = 576455; pgis_fn_nn ------------------ (576457,4.31045) (1 row)

Now, I need to capture that point, find out it’s z coordinate, and subtract that from the z coordinate of my vegetation point… . Not tonight… .

This was really helpful. I’ve been struggling to do something evidently simple and commonplace: take a csv file with numeric coordinate points, import to PostGIS, and then create a Geometry column and populate it with the lat-lons. Your description had the clearest explanation I’ve found on the Intertubes. Good luck with the Lidar!

Glad to hear it. What kind of system are you working on– a ‘Nix or a Windows system?

This seems like a very convoluted solution to a a fairly straightforward problem. The standard way to derive heights for the point cloud is to interpolate a DEM of the ground returns and then use a cursor to fetch the interpolated DEM value for each point. The one caveat is that different interpolation algorithms can produce very different results. I would recommend a Thin Plate Spline or TIN (do not use IDW). Your approach has the potential of adding a sever systematic bias.

Cheers,

Jeff

Jeffrey S. Evans, Ph.D | Senior Landscape Ecologist | The Nature Conservancy, North America Science | jeffrey_evans@tnc.org | Office (970) 484-9598 ext-114 Cell (970) 672-6766

I agree it’s a convoluted approach, especially given the ground point cloud has already been interpolated into a 2.5 foot posting DEM. That said, this is as much a thought experiment as anything, establishing the horizon for analyses in PostGIS. If WKTRaster were further along in development, that would be my ideal tool for doing this.

I did not clearly establish my reasoning for attempting this, but the question is “What can PostGIS do, and what are the limitations and methods for increasing efficiency of that process to a useable point?”– not what can be done in GIS. That’s well established.

All caveats aside, I’m not convinced there is any great potential for adding severe systematic bias. Systematic bias in the absence of an interpolator becomes less of a concern the more dense your point cloud. Here, the maximum distance to a nearest neighbor in areas not near a specular reflector (water) is about 5 feet. Interpolation, unless it’s a cliff or slump area is more a luxury than a necessity, so long as you don’t require an accuracy to your canopy height that exceeds a few feet, and I can’t think of any ecological applications that really need such accuracy. And in cliff or slump areas, a leaning tree over a gorge can severely bias your results, with or without an interpolator.

But, you raise good points, so in a future post, I’ll do some error analyses on this approach, well, once I get it working quickly enough… .

Your work sounds very interesting. What was the final size of your database and what kind of performance did you get for spatial queries?

Thanks

Size is relevant, but with the speed of queries seems to scale linearly with the size of the database, so the following link should give you some idea of speed:

https://smathermather.wordpress.com/2010/04/06/postgis-and-lidar-the-saga-completes/

Really, given there have been no earnest optimizations written to make PostGIS efficient at processing LiDAR datasets, with an index and clustering, analyses can be done quite efficiently on large datasets. I haven’t compared this to other systems, or raw analysis of better structured point datasets, but for nearest neighbor searches it works great. The larger the radius for the search, the slower, naturally, and this is where some of the new fast index scan functions would really shine.

To that end, I would recommend some of the new features available in PostGIS 1.5, e.g. ST_NearestPoint and related functions as more optimized solutions. I’ll do a shootout between these techniques sometime in the future… .

See also my latest post borrowing heavily from Nicklas Avéns blog.

https://smathermather.wordpress.com/2010/09/09/sqlmmore-acrobatics/

My apologies, I just found an error in this post. Ground points are class=2, not class=1. Class=1 is unclassified data.

Little update here, it’s now possible to use a raster in PostGIS (WKT Raster renamed to PostGIS Raster[1]) to get ground height. I don’t know if it’s faster than nearest neighbour, but for sure you can now use different interpolation functions. I’m actually using it on a large dataset (so I can’t say it’s fast, but it does the job).

[1] http://trac.osgeo.org/postgis/wiki/WKTRaster

@etiennebr,

I’ve been wondering if it wasn’t time to dive back into WKTRaster. Do you mind reporting how fast is not fast (hardware and points per second)?

I posted an performance analysis on postgis list http://old.nabble.com/PostGIS-raster-performance-analysis-td30923353.html

Interesting that the speed does not seem to be dependent on the number of points in the analysis. I have two questions on the two different approaches– once on performance, and the other on veracity. Would you be willing to share data and code so we can run the analysis both ways on the same hardware and OS and compare speed and accuracy? We could post the results here and on the PostGIS users group.

Excellent idea.

Nifty use of the return numbers and classifications,

How would you do your vegetation heights if you only had x,y,z coordinates? I deal with a lot of lidar data that does not have return numbers, classifications, etc.

Hmm, just XYZ, no brightness, eh? If you had brightness and return number, you could perform a classification, and I’d suggest you look to GRASS for this.

Brightness and return number aside, there is one solution that I have contemplated. You could perform a 3D concave hull calculation, and then calculate the difference between the top and bottom of the concave hull. It’s a non-trivial solution, but would give very good results. Unfortunately, I don’t know of any existing open source solution for this. PostGIS implements a 2D concave hull, but 3D tools are still a latent part of the project.

Alternatively, you can treat this as a sampling problem, and if you don’t mind degrading your data somewhat, check the difference between the minimum and maximum height of the points in a regular grid. It could result in some ugly data though, especially at the edges of features, like the edge a forest, or the edge of a hill. A way to refine it for the edges of your data would be to use the variance of your data to determine how much sampling to do, similar to jpeg compression. In other words, you start with a grid of a set size, say 10 meters between each cell. For this grid, calculate the min, max, and stdev (or some other measure of variance) of the elevation values. For areas beneath your threshold for stdev, you assign the ( max – min ) for the height value. For areas above your threshold, you increase your sampling and retest. This could work very well, and would replicate most of the advantages of a concave hull.