I hung out this week at the Boston Code Sprint http://wiki.osgeo.org/wiki/Boston_Code_Sprint_2013, which is a “C-Tribe” code sprint for improving things like PostGIS, MapServer, and other GeoFOSS projects written in C. See Paul Ramsey’s posts on PostGIS and MapServer for more on what everyone was working on.

Being my first time at a code sprint, it’s been very interesting, and it’s a very warm and inviting group. I will have some forthcoming posts on the work I did on skeletonization, and also work on getting it up on GitHub. Toward this end, Voronoi (yipee!) should land in PostGIS in the future via two venues. The first venue to provide Voronoi will be from the recently welcomed SFCGAL (optional) dependency additions to PostGIS coming down the pike from the Oslandia representatives, Olivier Courtin and Hugo Mercier, who were at the sprint. The second venue will be the addition of Voronoi from within GEOS as a port from JTS, since JTS already has it implemented. That may be a few months off, as it is being proposed as a Google Summer of Code (GSoC) project which Sandro Santilli agreed to manage (after hunting me down in IRC for my overly broad GEOS ticket).

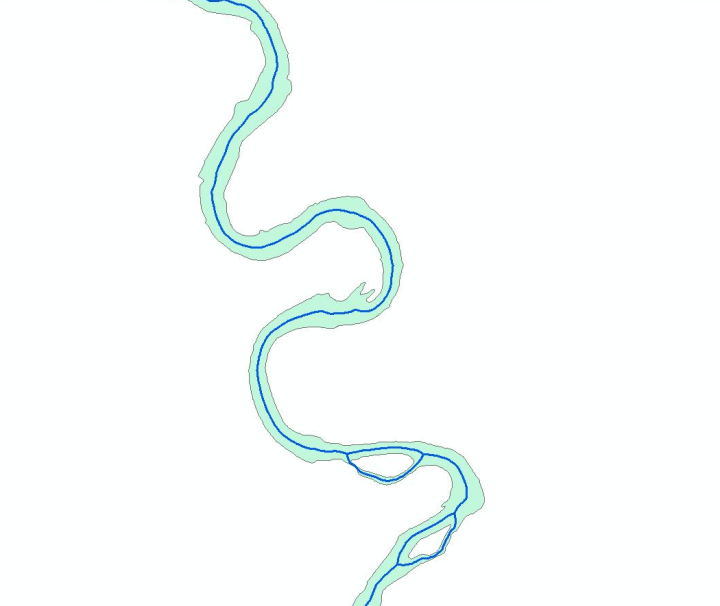

For my part, I cleaned up and worked on documenting my existing code base for routing skeletonization, played a bit with some additional filtering techniques appropriate to skeletonization, and ran some performance tests against the skeleton results that Olivier was producing from an ST_StraightSkeleton function leveraging the CGAL computational geometry library. It wasn’t a fair test, as my skeletonization was approximate, and Olivier’s exact, but it did give us the sense that ST_Voronoi from that codebase might be more performant for the skeletonization problem than exact skeletonization as performed by CGAL. As part of the skeletonization problem, I have been tasked by Paul Ramsey to write a C implementation of Dijkstra routing, to remove my pgRouting dependency from my skeletonization code, which I will start in on when I get a moment to breathe.

So, what will skeletonization look like when it’s complete? Nobody knows, but my projection is that we’ll have a few functions, dependent upon the kind of information you have about the geometry you want to skeletonize. So for example, if you had a stream network with known inputs and outputs, the algorithm would take advantage of that a priori knowledge and use it to clean up the final skeleton. Something like:

skeleton_geometry ST_Skeletonize(geometry polygon, geometry multipoint);

This is will create a nearly perfect skeleton for the cases where you have this a priori knowledge.

The tougher problem, of course, is when you have less knowledge about the skeleton. And if you want to encourage PostGIS developers to kibitz about analytical geometry, pose this as a problem– believe me that hours will be spent generating new algorithms for solving it. I arrived with 4 ideas on skeletonization (one of them the above) and left with an additional 3 or 4 great ones from conversations with Stephen Woodbridge, Regina and Leo, Pierre Racine, and Bborie Park (hopefully I haven’t forgotten any). But, regardless of which of these pan out, the idea is simple, instead of two input geometries, these will take an input polygon geometry and one or more tuning parameters. Cheers to Paul too for helping to limit the depth of this rabbit hole for me.

skeleton_geometry ST_Skeletonize(geometry polygon, value double, skeletonType text);

These solutions will likely not be as good as the routing solution above, but will be tunable to a posteriori results. The use case here might be scanned maps of road networks, contours or similar from which we want to extract linear features, or similar. Thus these approaches are an important addition to enhancing conversion from PostGIS Raster data type.