I chatted with Howard Butler (@howardbutler) today about a project he’s working on with Uday Verma (@udayverma @udaykverma) called Greyhound (https://github.com/hobu/greyhound), a point cloud querying and streaming framework over WebSockets for the web and for your native apps. It’s a really promising project, and I hope to kick its tires soon.

The conversation inspired this post, which I’ve been meaning to write for a while: a summary of Free and Open Source software for photogrammetrically derived point clouds. Why? Because storage. It’s so easy now to take lots of pictures, use a structure from motion (SfM) approach to reconstruct the camera positions and a sparse point cloud, and then feed those into a multi-view stereo (MVS) approach to construct dense point clouds in order to… okay. Getting ahead of myself.

PDPCs (photogrammetrically derived point clouds; I hate the term but haven’t found a better one) are 3D reconstructions of the world built from 2D pictures taken from multiple perspectives. This is ViewMaster on steroids. Scratch that. This is ViewMaster on blood transfusions and Tour de France-level micro-doping. This is amazing technology FTW.
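Under the hood, the “3D from 2D” step rests on triangulation: each image observation defines a viewing ray from a camera center, and the 3D point lies where rays from different cameras (nearly) meet. Here is a minimal sketch of the classic two-view midpoint method, assuming the camera centers and ray directions are already known; real SfM and MVS packages use more robust multi-view formulations and refine everything with bundle adjustment.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def add_scaled(p: Vec3, d: Vec3, s: float) -> Vec3:
    """Return p + s * d."""
    return (p[0] + s * d[0], p[1] + s * d[1], p[2] + s * d[2])


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between lines c1 + s*d1 and c2 + t*d2.

    c1, c2 are camera centers; d1, d2 are viewing-ray directions (need not be unit length).
    """
    w0 = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; the point cannot be triangulated")
    # Closed-form parameters of the closest points on each line.
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = add_scaled(c1, d1, s)
    p2 = add_scaled(c2, d2, t)
    # With noisy observations the rays rarely intersect exactly, so split the difference.
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))
```

For example, two cameras at (0, 0, 0) and (2, 0, 0) that both observe the point (1, 1, 5) recover it exactly, because their rays intersect there.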

So, imagine taking a couple of thousand unreferenced tourist images and using them to reconstruct the original camera positions and a sparse cloud of colorized points representing the shell of the Colosseum.

Magical. This is step one. For this we use Bundler.

Or maybe OpenMVG.
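If you go the Bundler route, the camera poses and sparse points end up in a plain-text `bundle.out` file. The sketch below reads it, assuming the v0.3 layout described in the Bundler manual: a camera count and a point count, then per camera a line with the focal length and two radial distortion terms, a 3x3 rotation `R` and a translation `t`, then per point its position, RGB color and view list. The class and function names are my own, not part of Bundler; the camera position is recovered as -R^T t.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Camera:
    focal: float
    k1: float
    k2: float
    R: List[List[float]]  # 3x3 rotation matrix, row-major
    t: List[float]        # translation vector

    def center(self) -> Tuple[float, ...]:
        """Camera position in world coordinates, -R^T t."""
        return tuple(-sum(self.R[r][c] * self.t[r] for r in range(3)) for c in range(3))


@dataclass
class Point:
    xyz: Tuple[float, ...]
    rgb: Tuple[int, ...]
    views: List[Tuple[int, int, float, float]]  # (camera index, key index, x, y)


def parse_bundle(text: str) -> Tuple[List[Camera], List[Point]]:
    """Parse the contents of a Bundler v0.3 bundle.out file (hypothetical helper)."""
    body = [ln for ln in text.splitlines() if not ln.lstrip().startswith("#")]
    tokens = iter(" ".join(body).split())

    def take(n: int) -> List[float]:
        return [float(next(tokens)) for _ in range(n)]

    num_cams, num_pts = (int(v) for v in take(2))
    cams: List[Camera] = []
    for _ in range(num_cams):
        f, k1, k2 = take(3)
        R = [take(3) for _ in range(3)]
        cams.append(Camera(f, k1, k2, R, take(3)))

    points: List[Point] = []
    for _ in range(num_pts):
        xyz = tuple(take(3))
        rgb = tuple(int(v) for v in take(3))
        views = []
        # The view list starts with its length, then (camera, key, x, y) per view.
        for _ in range(int(take(1)[0])):
            cam, key, x, y = take(4)
            views.append((int(cam), int(key), x, y))
        points.append(Point(xyz, rgb, views))
    return cams, points
```

The view lists are what tie the sparse cloud back to the photos, and they are exactly what the dense steps downstream need to decide which images to compare.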

OK, now that we know where all our cameras are and have a basic sense of the scene’s structure, we can go deeper and reconstruct dense point clouds with a multi-view stereo approach. But wait a second: this can be memory intensive, so first let’s split the scene into chunks that we can process separately and then put back together at the end. Enter Clustering Views for Multi-view Stereo (CMVS).
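CMVS itself solves a constrained optimization: roughly, it drops redundant images and splits the rest into clusters under a size cap while trying to keep the sparse points covered. The sketch below is a much simpler, hypothetical stand-in that only conveys the chunking idea: it greedily groups cameras that share many sparse points (for example, the view lists from a bundle file) into clusters of at most `max_size` images. It makes no coverage guarantees, and `greedy_view_clusters` is an illustrative name, not a CMVS function.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, Iterable, List, Set


def greedy_view_clusters(visibility: Iterable[Set[int]], max_size: int) -> List[List[int]]:
    """Hypothetical greedy grouping of cameras into clusters of at most max_size.

    visibility: one set of camera ids per sparse point (the cameras that see it).
    """
    shared: Dict[int, Dict[int, int]] = defaultdict(lambda: defaultdict(int))
    n_obs: Dict[int, int] = defaultdict(int)
    for cams in visibility:
        for c in cams:
            n_obs[c] += 1
        # Count how many sparse points each pair of cameras has in common.
        for a, b in combinations(sorted(cams), 2):
            shared[a][b] += 1
            shared[b][a] += 1

    unassigned = set(n_obs)
    clusters: List[List[int]] = []
    while unassigned:
        # Seed with the most-observed remaining camera (lowest id on ties).
        seed = min(unassigned, key=lambda c: (-n_obs[c], c))
        cluster = [seed]
        unassigned.remove(seed)
        while len(cluster) < max_size and unassigned:
            # Pick the camera sharing the most sparse points with the cluster so far.
            best = min(unassigned, key=lambda c: (-sum(shared[c][m] for m in cluster), c))
            if sum(shared[best][m] for m in cluster) == 0:
                break  # nothing left overlaps this cluster; start a new one
            cluster.append(best)
            unassigned.remove(best)
        clusters.append(sorted(cluster))
    return clusters
```

Each cluster could then be handed to the dense matcher on its own, so peak memory roughly tracks the cluster size rather than the size of the whole photo collection.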

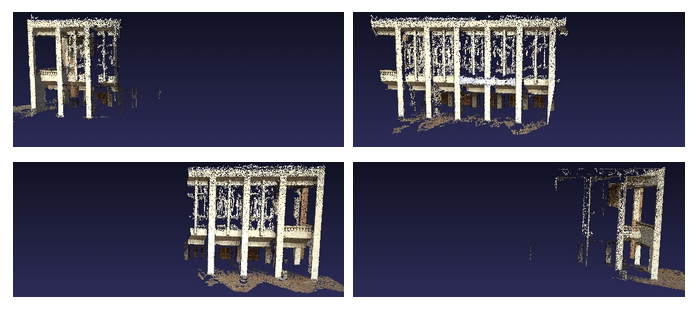

To be honest, the image above shows the results of our final step, multi-view stereo (MVS), computed on the bite-size chunks that the previous step produced. Here we apply PMVS2.
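At the heart of PMVS-style dense matching is a photometric consistency test: a candidate surface patch is kept only if it looks alike in the images that see it. As I understand the PMVS2 documentation, its `threshold` option (initial value 0.7) is a cutoff on a normalized cross-correlation (NCC) score, which ranges from -1 to 1. Here is a minimal sketch of NCC over two flattened patches of pixel intensities; how the patches are sampled from the images is left out, and `is_photo_consistent` is a hypothetical helper.

```python
import math
from typing import Sequence


def ncc(patch_a: Sequence[float], patch_b: Sequence[float]) -> float:
    """Normalized cross-correlation of two equally sized, flattened patches, in [-1, 1]."""
    if len(patch_a) != len(patch_b) or not patch_a:
        raise ValueError("patches must be non-empty and the same size")
    n = len(patch_a)
    mean_a = sum(patch_a) / n
    mean_b = sum(patch_b) / n
    # Mean-centering removes brightness offsets; dividing by the norms removes contrast.
    da = [v - mean_a for v in patch_a]
    db = [v - mean_b for v in patch_b]
    denom = math.sqrt(sum(v * v for v in da) * sum(v * v for v in db))
    if denom == 0.0:
        return 0.0  # a flat (textureless) patch carries no matching signal
    return sum(x * y for x, y in zip(da, db)) / denom


def is_photo_consistent(patch_a: Sequence[float], patch_b: Sequence[float],
                        threshold: float = 0.7) -> bool:
    """Accept a match when NCC is above the threshold (0.7 mirrors PMVS2's documented start)."""
    return ncc(patch_a, patch_b) > threshold
```

Because NCC ignores uniform brightness and contrast changes, it copes reasonably well with tourist photos taken under very different lighting.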

Now, if you’re like me, you want binaries. We have many options here.

If you are willing to depart from pure open source, VisualSFM is an option.

But you’ll have to pay for commercial use. The same is true for CMPMVS:

http://ptak.felk.cvut.cz/sfmservice/websfm.pl?menu=cmpmvs

But it has the bonus of returning textured meshes, which is mighty handy. If you are a hobbyist UAS/UAV (drone) person, this might be a good option. See FlightRiot’s directions for it here:

http://flightriot.com/post-processing-software/cmpmvs/

Me, I’m a bit of a FOSS purist. For that, you can roll your own or get binaries from the Python Photogrammetry Toolbox.

Finally, Mike James is working on some image-level georeferencing for point clouds, “coming soon,” so stay tuned for that.
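I don’t know the details of that georeferencing work, so here is only a generic illustration of what georeferencing a PDPC usually involves: fitting a similarity transform (scale, rotation, translation) from the arbitrary SfM model frame to real-world coordinates using ground control points. To keep it short, the sketch assumes the model is already leveled (its z-axis points up), so it solves a closed-form least-squares 2D similarity in x/y and applies the same scale to z with a fitted vertical offset. A full 3D solution would also estimate tilt, and both function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Pt = Tuple[float, float, float]
Params = Tuple[float, float, float, float, float, float]


def fit_leveled_similarity(model: Sequence[Pt], world: Sequence[Pt]) -> Params:
    """Least-squares model-to-world similarity, ASSUMING the model is already leveled.

    Returns (a, b, tx, ty, s, tz) such that
        X = a*x - b*y + tx,  Y = b*x + a*y + ty,  Z = s*z + tz,  with s = hypot(a, b).
    """
    n = len(model)
    if n != len(world) or n < 2:
        raise ValueError("need at least two matching control points")
    mc = [sum(p[i] for p in model) / n for i in range(3)]  # model centroid
    wc = [sum(p[i] for p in world) / n for i in range(3)]  # world centroid
    num_a = num_b = denom = 0.0
    for (x, y, _), (X, Y, _) in zip(model, world):
        x, y, X, Y = x - mc[0], y - mc[1], X - wc[0], Y - wc[1]
        num_a += x * X + y * Y
        num_b += x * Y - y * X
        denom += x * x + y * y
    if denom == 0.0:
        raise ValueError("control points must not all share the same x/y")
    a, b = num_a / denom, num_b / denom
    s = math.hypot(a, b)  # a = s*cos(theta), b = s*sin(theta)
    tx = wc[0] - a * mc[0] + b * mc[1]
    ty = wc[1] - b * mc[0] - a * mc[1]
    tz = wc[2] - s * mc[2]  # least-squares vertical offset for a fixed scale
    return a, b, tx, ty, s, tz


def apply_leveled_similarity(params: Params, p: Pt) -> Pt:
    a, b, tx, ty, s, tz = params
    x, y, z = p
    return (a * x - b * y + tx, b * x + a * y + ty, s * z + tz)
```

With real control points you would also look at the per-point residuals after the fit, since a single bad GCP can skew all six parameters.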

PDPCs for the win!

Hi,

I would be happy to help you use OpenMVG.

Thanks, useful. Though the link to the PPT is broken, this seems to be the new one:

http://184.106.205.13/arcteam/ppt.php

I couldn’t figure out a URL that uses their domain name; that’s a link from their “OpArc” page at

http://www.arc-team.com

I couldn’t find the real link either. Thanks for that!