LiDAR and photogrammetric point clouds

If we want to understand terrain, we have a pricey option and an inexpensive one. On the pricey side, LiDAR is the well-loved tool of choice. It is synoptic, active (and therefore usable day or night), increasingly affordable (but still quite expensive), and works around even thick and tall evergreen vegetation (check out Oregon’s LiDAR specifications as compared with US federal ones, and you’ll understand that sometimes you have to turn the LiDAR all the way up to 11 to see through vegetation).

For a comparatively affordable solution, photogrammetrically derived point clouds and the resulting elevation models, like the ones we get from OpenDroneMap, are sometimes an acceptable compromise. Yes, they don’t work well in thickets, forests, and other continuous vegetation cover, but with a drone costing a few hundred dollars, a decent camera, and a bit of field time, you can quickly collect some pretty cool datasets.

As it turns out, under just the right conditions we can collect really great elevation datasets derived from photogrammetry. More about that in a moment. First, let’s talk a little about the locale:

Sharon Conglomerate and Whipps Ledges, Hinckley Reservation

One of my favorite rock formations in Northeast Ohio is Sharon Conglomerate. A mix of sandstone and proper conglomerate, Sharon is a stone in NEO that provides wonderful plant and animal habitats, and not coincidentally provides a source of coldwater springs, streams, and cool wetland habitats across the region. A quick but good overview of the geology of this formation can be found here:

Mapping conglomerate

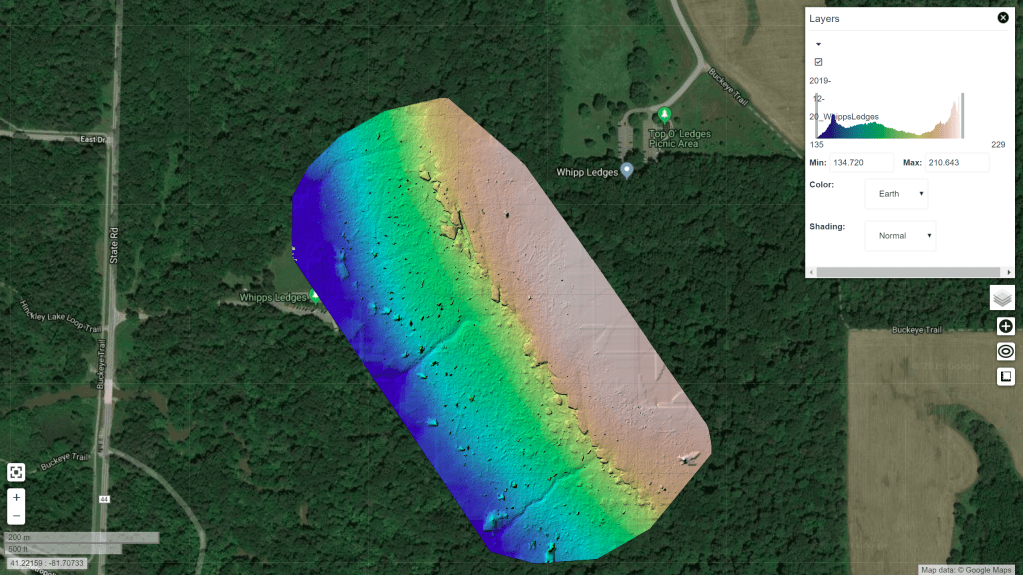

One of the conglomerate outcrops in Cleveland Metroparks is Whipps Ledges in Hinckley Reservation. It’s a favorite NEO climbing location, great habitat, and a beautiful place to explore. We wanted to map it with a little more fidelity, so we did a flight in August hoping to see and map the rock formations in their glorious detail:

Unfortunately, as my geology friends and colleagues like to joke, to map out the conglomerate, we need to “scrape away the pesky green vegetation stuff first”. We don’t want to do this, of course — this is a cool ecological place because it’s a cool geological place! It just happens to be a very well vegetated rocky outcrop. The maple, beech, oak and other trees there take full advantage of the lovely water source the conglomerate provides, so we can’t even glean the benefits of mapping over sparse and lean xeric oak communities: this is a lush and verdant locale.

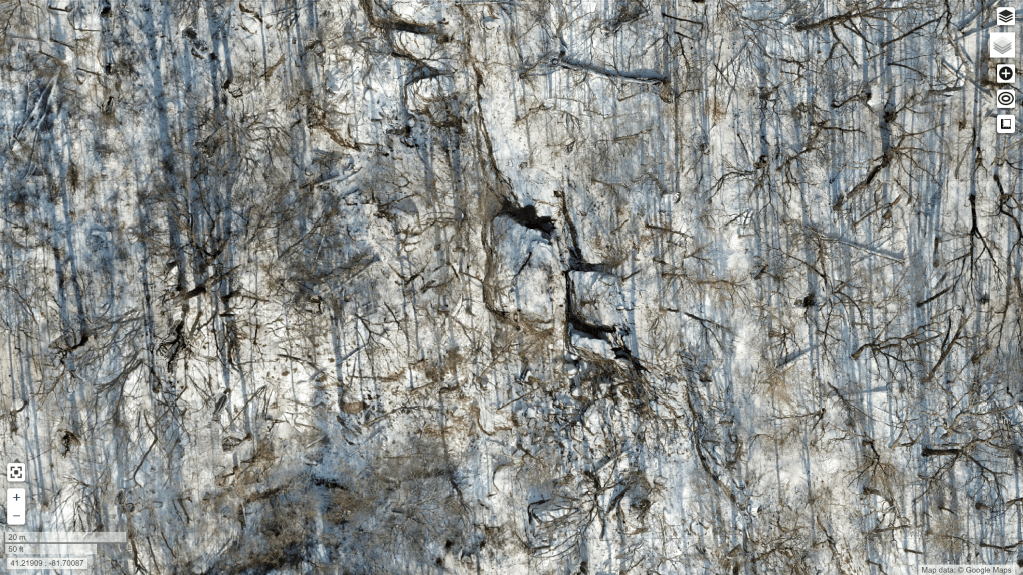

So yesterday, we flew Whipps Ledges again, but this time the leaves were off the trees. It can still be a challenge to get a good sense of the shape of the landform, even with leafless trees: forest floors do not provide good contrast with the trees above them, and it can be difficult to get good reconstructions of the terrain.

But yesterday, we were lucky: there was a thin layer of snow everywhere, providing the needed contrast without being thick enough to distort the height of the forest floor much. Shadows from the low sun created great textures on the otherwise featureless snow that could be used in matching.

The good, the bad, and the spectacular

The bad…

So, how are the results? Let’s start with the bad. The orthophoto is a mess. There’s actually probably very little technically wrong with the orthophoto: the stitching is good, the continuity is excellent, the variation between scenes non-existent, the visual distortions minimal. But it’s a bad orthophoto in that the high contrast between the trees and the snow, compounded by the shadows from the low sun in a nearly cloudless sky, results in a noisy and difficult-to-read image. Bad data for an orthophoto in; bad orthophoto out.

The good

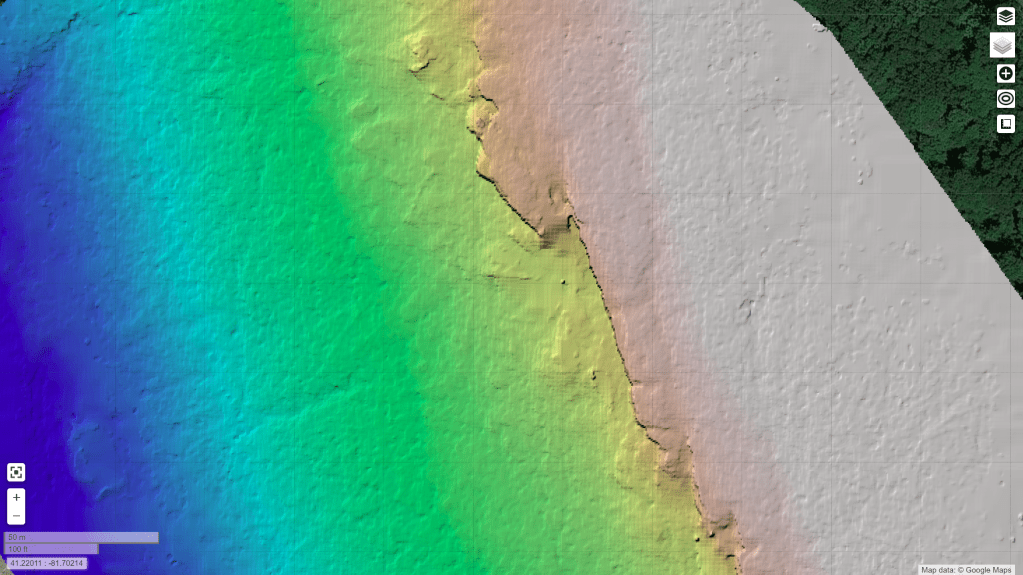

The orthophoto wasn’t our priority for these flights, however. We were aiming for good elevation models. How is our Digital Terrain Model (DTM)? It’s pretty good.

The DTM looks good on its own, and even compares quite favorably with an (admittedly dated, 2006) LiDAR dataset. It is crisp, shows the cliff features better than the LiDAR dataset, and represents the landform accurately:

The spectacular

So, if the ortho is bad and the DTM is good, what is great? The DSM is quite nice:

The DSM looks great. We get all the detail over the area of interest: each cliff face and boulder shows up clearly in the escarpment.

Improvements in the next iteration

The digital surface model is really quite wonderful. In it we can see many of the major features of the formation, including named features like The Island and a clear delineation of the Main Wall, along with other features that don’t show in the existing terrain models.
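One way to make that kind of comparison concrete is to subtract a terrain model from the surface model cell by cell and flag where the surface stands well above the terrain. The following is a minimal sketch, assuming both models have already been resampled to the same grid. The tiny grids, the function names, and the 2 m threshold are all made up for illustration and are not taken from the Whipps Ledges data.

```python
def height_above_terrain(dsm, dtm):
    """Cell-by-cell difference between a surface model and a terrain model."""
    return [[s - t for s, t in zip(dsm_row, dtm_row)]
            for dsm_row, dtm_row in zip(dsm, dtm)]


def flag_features(dsm, dtm, min_height):
    """Return (row, col, height) for cells at least min_height above the terrain."""
    flagged = []
    for r, row in enumerate(height_above_terrain(dsm, dtm)):
        for c, height in enumerate(row):
            if height >= min_height:
                flagged.append((r, c, height))
    return flagged


# Hypothetical 3x3 grids in meters: the surface model contains a 3 m block
# that the terrain model lacks.
toy_dsm = [[100.0, 100.0, 100.0],
           [100.0, 103.0, 100.0],
           [100.0, 100.0, 100.0]]
toy_dtm = [[100.0] * 3 for _ in range(3)]

features = flag_features(toy_dsm, toy_dtm, min_height=2.0)
print(features)
```

With real data, the two grids would come from the DSM and DTM rasters, read with whatever raster library you prefer.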

Due to untuned filtering parameters, we filter out more of the features than we’d like in the terrain model itself. It would be nice to keep The Island and other smaller rocks that have separated from the primary escarpment. I expect that when we choose better parameters for deriving the terrain model from the surface model points, we can strike a good balance and get an even better terrain model.
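To show why the parameters matter, here is a toy ground filter based on a morphological opening (an erosion followed by a dilation) over a gridded surface. It is a simplified stand-in for illustration, not the filter OpenDroneMap actually runs; the grid, the `radius` and `threshold` parameters, and the function names are all assumptions.

```python
def window_op(grid, radius, op):
    """Apply op (min or max) over a square window of the given radius, clipped at the edges."""
    rows, cols = len(grid), len(grid[0])
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            window = [grid[rr][cc]
                      for rr in range(max(0, r - radius), min(rows, r + radius + 1))
                      for cc in range(max(0, c - radius), min(cols, c + radius + 1))]
            out_row.append(op(window))
        out.append(out_row)
    return out


def opening(grid, radius):
    """Morphological opening: erosion (min) followed by dilation (max)."""
    return window_op(window_op(grid, radius, min), radius, max)


def filter_terrain(surface, radius, threshold):
    """Keep cells within threshold of the opened surface; replace the rest with the opened value."""
    opened = opening(surface, radius)
    return [[s if s - o <= threshold else o for s, o in zip(s_row, o_row)]
            for s_row, o_row in zip(surface, opened)]


# Hypothetical 9x9 surface in meters: flat ground at 100 m...
surface = [[100.0] * 9 for _ in range(9)]
# ...with a 3x3 block of rock 4 m tall in the middle...
for r in range(3, 6):
    for c in range(3, 6):
        surface[r][c] = 104.0
# ...and a one-cell, 10 m spike standing in for a tree.
surface[1][7] = 110.0

gentle = filter_terrain(surface, radius=1, threshold=0.5)
aggressive = filter_terrain(surface, radius=2, threshold=0.5)

print(gentle[4][4], gentle[1][7])
print(aggressive[4][4], aggressive[1][7])
```

In this toy case the small window keeps the block of rock while still flattening the spike, and the larger window flattens both. That is the balance we are after: a window big enough to drop the trees but small enough to leave detached rocks in the terrain model.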

Beating LiDAR at its own game

It is probably not fair to say we beat LiDAR at its own game. The LiDAR dataset we have to compare to is 13 years old, and a lot has improved in the intervening years. That said, with a $900 drone, free software, 35 minutes of flying, and two batteries, we reconstructed a better terrain model for this area than the professional version from 2006.

And we have control over all the final products. LiDAR filtering tends to remove features like this regardless of point density, because The Island and similar formations are difficult to distinguish in an automated fashion from buildings. Tune the model for one, and you remove the other.

For our use case, however, we can use the best parameters for this area, take a high-touch approach, and create a really nice map of a special area in our parks for very low cost. High touch, low cost. I can’t think of a sweeter spot to reach.
