Bad puns are a currency in my household these days. Sorry/not sorry.

Artificial Intelligence, whether machine learning or deep learning, have developed a lot of cachet of late. Some of it is deserved, some is overblown. We are somewhere interesting on the hype curve. As a remote sensing person from way back, I can’t ignore the work in this field, but I also need to get my job done. Some projects in this field in the last few years have burned more personnel time and fossil fuel than I have bandwidth for: so I have been in a watch and wait period. Also, I have been in a get off my lawn period. “You think unsupervised classification is special? I was doing that in back in 2001, young whipper snapper! We didn’t even have hardware back then. We counted the pixels on our fingers! Dammit, when I walked to school, it was the Mahalanobis distance — both ways!”

A couple of years ago I stumbled onto fast.ai, whose motto is:

Making neural nets uncool again

Lol. If that doesn’t emit a Get off my lawn vibe, I don’t know what does. But they mean it — there is small army’s worth of resources for making the state of the art in AI accessible. They genuinely want to see domain experts become AI experts and create new great things. Combine that with any number of other online resources for the area, and you’ve got a good cookbook and culinary school for doing AI. So I started to dive into their materials, but I didn’t get far because I:

- Didn’t want to buy a computer with a graphics card

- Really didn’t want to rent a computer with a graphics card.

I’ve lost a fair amount of money renting computers on the internet, and predicting that amount poorly. I really didn’t want to repeat that in the name of deep learning. My learning about compute prices seemed deep enough.

I also didn’t have a small enough use case. Something achievable, satisfying. Something that would wet the appetite, but also not be my typical (over) work.

Enter Coffee + AI

Let’s be honest: espresso + AI is exactly the sort of thing that would elicit a get off my lawn response from me. Self speaking to myself or any other yahoo pitching this idea: “What are trying to do? Are you just trying to get funding for an idea by adding AI to it?” Maybe the proposed combination is eliciting just such a response from you? Are you thinking: this might just be pointless, vain, and an abuse of software?

Maybe.

But hear me out. What if we could automatically read the simple little gauge below, and use those readings to make better espresso? What if by reading this little gauge, more of us could discover affordable, communicable, and replicable ways to create better espresso?

Background

Lots of cool tech is going into espresso these days, and so much of it surrounds how to better extract better espresso. The knobs to twist here include: temperature, pressure, flow rate, extraction rate and a whole host of interacting elements. Its a wonderful complicated, beautiful craft, and its cool to see people thinking about ways to make it better.

For example, we have the Decent Espresso maker, which let’s you plot, control, and replicate temperature, pressure, flow rate, and weight from a single android tablet:

I am assured by a guy with a posh accent and hair product for sale in his YouTube channel that at $2000-$2800, this is quite an affordable solution for what it does. Which, if I had that kind of expendable income, I would probably be looking into. I have learned much from James about coffee. But I recently shaved my head, so neither a $2800 espresso maker nor hair product is not in the cards in the short or mid-term.

And then there is Naked Portafilter’s Smart Espresso Profiler, a $410 addition to lever espresso machines, which allows you to replicate shots very similarly on any lever machine, with a small piece of hardware and a smartphone app. One of the promises here is you can replicate that fantastic shot on that antique / unique / or expensive espresso machine, with just a little measurement along the way. What a time to be a hobbyist or professional in this industry.

But, can we do something with just what we have: a Flair Espresso maker, a computer vision algorithm of some sort, and a little thoughtfulness? Maybe.

Deeper Gauges

Digital gauges are pretty expensive. Your typical pressure gauge might be 12 bucks. Much less wholesale. Your digital version might fetch 10x or more that. The fact that Naked Portafilter was able to put something together at the $400 price point and with great looking software is pretty cool.

But if we want to go deeper with gauges, we need to understand our options with respect to deep learning approaches. Common deep learning approaches focus on classification: is there a dog, cat, grizzly bear, or teddy bear in this image and similar problems. This helps Google make your image collection more relevant to you, and up sell that data to advertisers and state actors trying to understand your motives better.

So I started by generating a bunch of labeled data: just synthetically generated gauges at different known pressure settings:

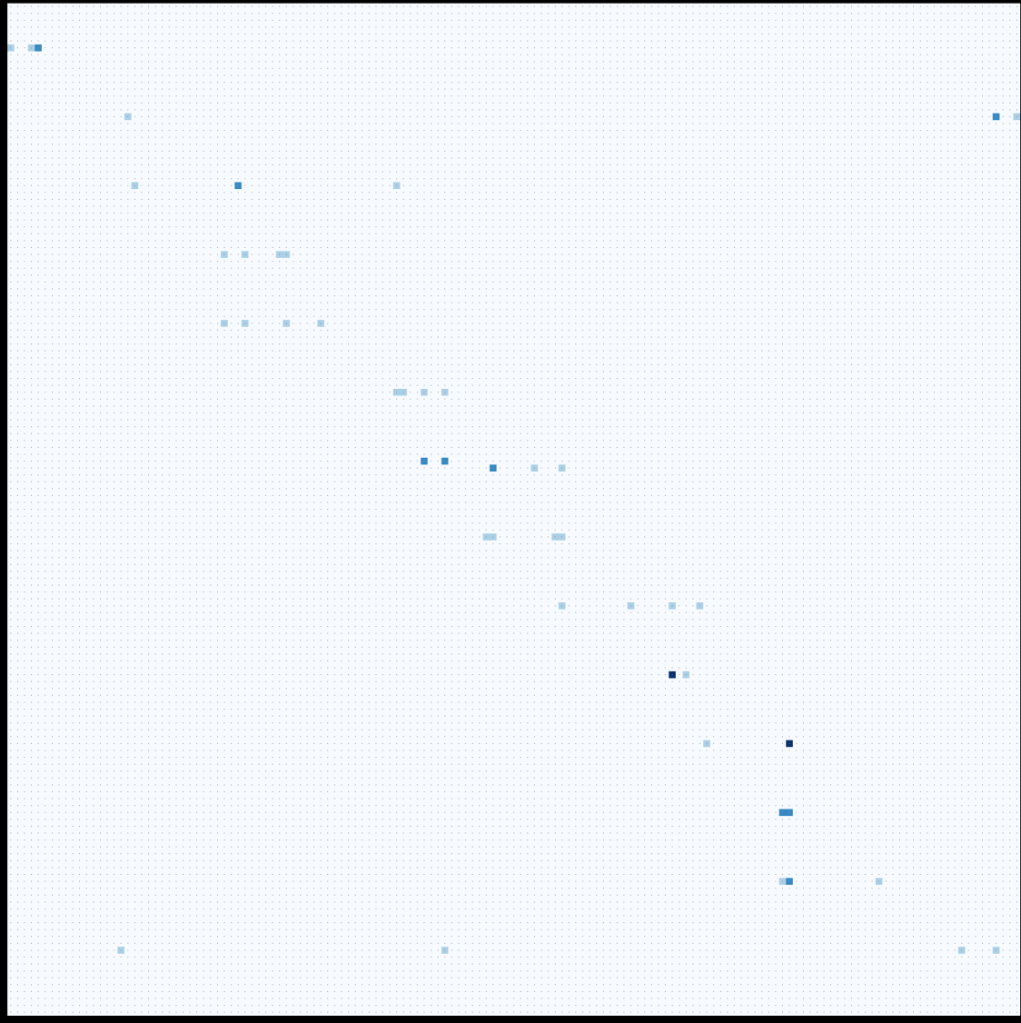

Not knowing what I was doing at first, I tried the easiest thing, which is to create a classification based on these data. I had a couple different gauge types to mix things up, and got some messy if promising results. The chart below, if it were nearly perfect would have a bunch of blue along the diagonal from top left to lower right. The classification worked ok, but it isn’t the end goal anyway. I just wanted to understand some basic mechanics of running fastai and getting my own data into it.

From class to regress

So, now we dig deeper. A regression is what we need in order to get ratio data (in this case decimal pressure readings) from the data, as well as a training loss and validation loss that make sense. To do this we simply tell fastai that label_cls=FloatList, change our loss function, and Robert is your aunties husband. More detail on this later.

Results

I think the model is pretty good: it is reading the gauges well, but I need to dig in deeper into understanding training loss and validation loss for my use case. That said, look at how well this is reading the dial! (Even better than it looks: I have some nasty bias in my training and validation dataset that will be fixed in the next round):

Have gauge reader, will travel

Now we have an algorithm that’s pretty ok at reading gauges! There’s still plenty of work to do, but we have a promising start to replacing some expensive hardware with an algorithm and some general purpose hardware. I am thinking, as is my tendency, that this should be an open hardware, open software solution, maybe ultimately hosted on CrowdSupply to bring it to life in a long-term, community-sustainable way. In the meantime, now that the caffeine has worn off, I suppose it’s time for bed.

Great work so far, really looking forward to seeing this in action with my FSP

Hi there, looks very interesting. Proud flair owner and would be interested to test it and see how it works. Let me know if you are looking for volunteer to test. Hope you will successfully get this done and make a huge noise in the flair community ^^. Cheers

Thanks! It should be pretty interesting.

I could also think of an alternative approach –

Creating a Gauge needle segmentation model. The model would predict the needle from the segmentation model, and then with some post-precessing calculate the angle made by the needle. And then use this angle to estimate the reading.