In a previous post on combining coffee and AI, a combo which makes me think of the kind of marketing genius that was Dinosaur Train (Dinosaurs! : But with trains! :: Artificial Intelligence! : But with coffee!), we explored applying deep learning models to coffee extraction. The underlying question was whether we can easily and quickly train a deep learning model to read an analog gauge so we can digitize our coffee extraction. The answer is a resounding yes!

Prior Art

There is some prior work in this space, published at least two years ago, on generic reading of analog gauges: https://objectcomputing.com/resources/publications/sett/june-2019-using-machine-learning-to-read-analog-gauges. It's pretty cool. Their GitHub repo is here: https://github.com/oci-labs/deep-gauge/. Caveat emptor: there is no license on the repo, so we have to assume that the copyright is owned by Dr. Yang or Object Computing.

Also in the prior art is Zach Halvorson's Espresso Vision. It is not on the deep learning side of the house, but it is still pattern matching, and it sits in the same coffee-plus-computer-vision domain. Check out the rest of his work at the intersection of engineering and coffee, including:

Popcorn Air Popper Roaster – A home roaster was built and modified with a remote switch, an Arduino, an AC dimmer, and thermocouples.

Heat Gun + Flour Sifter Roaster – A second home roaster was built with a larger capacity and mechanically driven agitation.

Roast Profiling – A Yocto Thermocouple was purchased and installed in the hot air popcorn popper to monitor coffee bean temperature during roasting.

Roast Level Verification – A NIR spectrophotometer was employed to verify roast level and maintain consistency from batch to batch.

Espresso Vision – A computer vision algorithm was built for pressure profiling with a manual hand lever espresso maker – featured here

Cool stuff!

Approaches to generalizing models

A major challenge in training deep learning models is making them generalize well. Deep learning models don't inherently know which features are important. They may latch onto features that merely coincide with the important ones but aren't actually relevant to the task we are training the network to do. To counter this, good modeling frameworks apply a range of transforms to the data, such as adding noise, flipping images, and other machinations. These introduce enough variation beyond the raw training data to help close the generalization gap.
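Here is a minimal sketch in plain Python of the kinds of transforms described above (a random flip, a brightness shift, and added noise). It assumes a grayscale image stored as nested lists of pixel values in [0, 1]. The function names and parameters (`augment`, `p_flip`, `sigma`, `max_delta`) are hypothetical and don't come from any particular framework. Real frameworks do the same thing on tensors, with many more transforms to choose from.

```python
import random
from typing import List

Image = List[List[float]]  # grayscale pixels in [0.0, 1.0], row-major


def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in img]


def add_gaussian_noise(img: Image, sigma: float, rng: random.Random) -> Image:
    """Add zero-mean Gaussian noise to every pixel, clipped back into [0, 1]."""
    return [[min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row] for row in img]


def jitter_brightness(img: Image, max_delta: float, rng: random.Random) -> Image:
    """Shift all pixels by one random offset in [-max_delta, max_delta], clipped."""
    delta = rng.uniform(-max_delta, max_delta)
    return [[min(1.0, max(0.0, p + delta)) for p in row] for row in img]


def augment(img: Image, rng: random.Random, p_flip: float = 0.5,
            sigma: float = 0.05, max_delta: float = 0.1) -> Image:
    """Apply a random chain of 2D augmentations to one training image (hypothetical helper)."""
    out = hflip(img) if rng.random() < p_flip else img
    out = jitter_brightness(out, max_delta, rng)
    return add_gaussian_noise(out, sigma, rng)


if __name__ == "__main__":
    rng = random.Random(42)
    tiny = [[0.0, 0.5, 1.0],
            [0.2, 0.4, 0.6]]
    print(augment(tiny, rng))
```

There is one catch for a gauge reader. A horizontal flip mirrors the needle, so a flipped image no longer matches its original reading unless the label is changed too. Generic 2D augmentation only gets us so far here.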

Now, these frameworks do a good job, but their transforms tend to be two-dimensional. There are many simpler ways to get this computer vision job done. To justify not using one of them, and to justify building a model in Blender, I began to think through how bad the view of the pressure gauge could get, and how well computer vision could compensate. To be quite honest, with espresso makers we can probably control the view enough to avoid these edge cases. Espresso Vision uses a cell phone selfie camera and a carefully oriented gauge, which is a viable option. But maybe we want to generalize this approach to a variety of gauges and a variety of contexts.

Training our models with 3D data is kind of fun anyway, so let’s see what the inputs look like. BTW, another caveat emptor: the Flair Espresso logo is their trademark.

Toward a 3D Model

To create a 3D model of the gauge, I took a bunch of photos and reconstructed it in OpenDroneMap (is anyone surprised?). This gave me an unbiased set of proportions for the face. Because I didn't use ground control, the orthophoto that OpenDroneMap produced of the gauge face was a little off. So I loaded the 3D mesh in MeshLab, switched to orthographic view, and took a screenshot.

After some heavy cleanup in GIMP, I had a pretty nice face to feed into the 3D renderer:

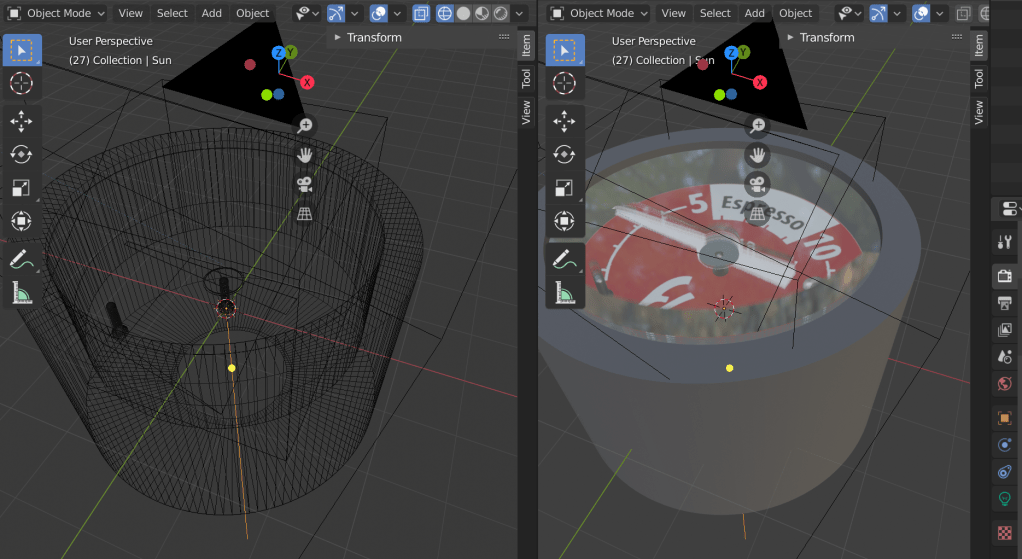

Taking measurements from the gauge itself, I created a quick 3D model:

Then I animated it, with careful keyframing so that each frame corresponds to a known value. So frame 20 = 2.0 PSI, frame 111 = 11.1 PSI, and so on:
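The keyframing convention is simple enough to write down: frame N shows a reading of N / 10 PSI. Below is a minimal sketch of how the rendered frames could be turned into labeled training data. The helper names and the zero-padded `0020.png` filename pattern are assumptions for illustration, not necessarily how the renders were actually saved.

```python
from typing import Dict, Iterable

FRAMES_PER_PSI = 10  # convention from the post: frame 20 -> 2.0 PSI, frame 111 -> 11.1 PSI


def frame_to_psi(frame: int) -> float:
    """Return the reading the needle was keyframed to show on this frame."""
    if frame < 0:
        raise ValueError("frame numbers must be non-negative")
    return round(frame / FRAMES_PER_PSI, 1)


def psi_to_frame(psi: float) -> int:
    """Inverse mapping: which frame to render for a given reading (0.1 PSI resolution)."""
    return round(psi * FRAMES_PER_PSI)


def build_labels(frames: Iterable[int], pattern: str = "{:04d}.png") -> Dict[str, float]:
    """Map rendered image filenames to their PSI labels.

    The default "{:04d}.png" pattern (zero-padded frame numbers) is a
    hypothetical naming scheme; adjust it to match the actual renders.
    """
    return {pattern.format(f): frame_to_psi(f) for f in frames}


if __name__ == "__main__":
    print(frame_to_psi(20), frame_to_psi(111))  # 2.0 11.1
    print(build_labels([20, 111]))
```

Keeping the mapping as a single constant means a different gauge or keyframing density only requires changing `FRAMES_PER_PSI`.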

3D modeling purists: please excuse my bad model. I thought UNION was a proper union that dissolves internal boundaries, as it does in the geospatial world…

Conclusion?

Finally, we have some training data for our deep learning model. The cool thing about this training data is that a model trained on it should (I hope) be able to read the gauge accurately. That should hold even from a strange angle, where perspective keeps the needle and the gauge markings from lining up the way they do head-on. The data also has some fun noise properties, like a glass cover with reflections. It should make for some interesting model training.
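To get a feel for how far viewing angle can push the needle away from where it "should" be, here is a rough sketch. It places the dial in front of a simple pinhole camera, tilts it about the vertical axis, and measures the needle angle as it would appear in the image. The geometry is hypothetical and is not the Blender scene. That includes the dial radius, camera distance, focal length, and the `apparent_needle_angle` helper itself.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def rotate_y(p: Vec3, tilt_rad: float) -> Vec3:
    """Rotate a point about the vertical (y) axis, i.e. turn the gauge sideways."""
    x, y, z = p
    c, s = math.cos(tilt_rad), math.sin(tilt_rad)
    return (c * x + s * z, y, -s * x + c * z)


def project(p: Vec3, focal: float) -> Tuple[float, float]:
    """Pinhole projection of a camera-space point (camera looks down +z)."""
    x, y, z = p
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (focal * x / z, focal * y / z)


def apparent_needle_angle(true_deg: float, tilt_deg: float,
                          offset: Vec3 = (0.0, 0.0, 0.3),
                          radius: float = 0.03, focal: float = 0.05) -> float:
    """Angle of the needle as it appears in the image, in degrees.

    The dial lies in its local x-y plane with the needle pivot at the origin.
    It is tilted about y, then moved to `offset` in camera space. All distances
    (metres) and the focal length are illustrative assumptions.
    """
    t = math.radians(true_deg)
    tip_local = (radius * math.cos(t), radius * math.sin(t), 0.0)
    tilt = math.radians(tilt_deg)
    pts = []
    for p in ((0.0, 0.0, 0.0), tip_local):  # needle pivot, then needle tip
        x, y, z = rotate_y(p, tilt)
        pts.append(project((x + offset[0], y + offset[1], z + offset[2]), focal))
    (u0, v0), (u1, v1) = pts
    return math.degrees(math.atan2(v1 - v0, u1 - u0))


if __name__ == "__main__":
    for tilt in (0, 30, 60):
        print(tilt, round(apparent_needle_angle(45.0, tilt), 1))
```

With the needle pivot on the camera's optical axis, the result reduces to pure foreshortening: atan2(sin θ, cos θ cos φ) for a true angle θ and tilt φ. For example, a 45° needle on a dial tilted 60° appears at about 63.4°. Moving the dial off-axis via `offset` adds genuine perspective effects on top of that. Either way, the same apparent angle can come from different true readings at different tilts. That ambiguity is what the 3D-rendered training data should help the model learn to resolve.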

I haven’t trained the model yet, but will do that next, and we will see how well it does!

One thought on “Gauging the best way to combine Deep Learning and Coffee — Bean 2”