Project State

OpenDroneMap has really evolved since I first put together a concept project presented at FOSS4G Portland in 2014, and hacked with my first users (Michele M. Tobias & Alex Mandel). At this stage, we have a really nicely functioning tool that can take drone images and output high-quality geographic products. The project has 45 contributors, hundreds of users, and a really great community (special shout-out to Piero Toffanin and Dakota Benjamin, without whom the project would be nowhere near as viable, active, or wonderful). Recent improvements can be roughly categorized into data quality improvements and usability improvements. Data quality improvements were aided by the inclusion of better point cloud creation from OpenSfM and better texturing from mvs-texturing. Usability improvements have largely come from the development of WebODM as a great-to-use and easy-to-deploy front end for OpenDroneMap.

With momentum behind these two directions, improved usability and improved data output, it’s time to think a little about how we scale OpenDroneMap. It works great for individual flights (up to a few hundred images at a time), but a promise of open source projects is scalability. We regularly get questions from the community about how to run ODM on larger and larger datasets in a sustainable and elastic way. To answer these questions, let me outline where we are going.

Project Future

Incremental optimizations

When I stated that scalability is one of the promises of open source software, I mostly meant scaling up: if I need more computing resources with an open source project, I don’t have to purchase more software licenses, I just need to rent or buy more computing resources. But an important element of scalability is the per-unit use of computing resources as well. If we are not efficient and thoughtful about how we use things on the small scale, then we are not maximizing our scaled-up resources. Are we efficient in memory usage? Is our matching algorithm delivering the accuracy we need without wasting the processor resources I have? I think of this as improving OpenDroneMap’s ability to efficiently digest data.

Incremental toolchain optimizations are thus part of the near future for OpenDroneMap (and by consequence OpenSfM, the underlying computer vision toolkit for OpenDroneMap), focusing on memory and processor resources. The additional benefit here is that small projects and small computing resources also benefit. For humanitarian and development contexts where compute and network resources are limited, these incremental improvements are critical. Projects like American Red Cross’ Portable OpenStreetMap (POSM) will benefit from these improvements, as will anyone in the humanitarian and development communities who needs efficient processing of drone imagery offline.

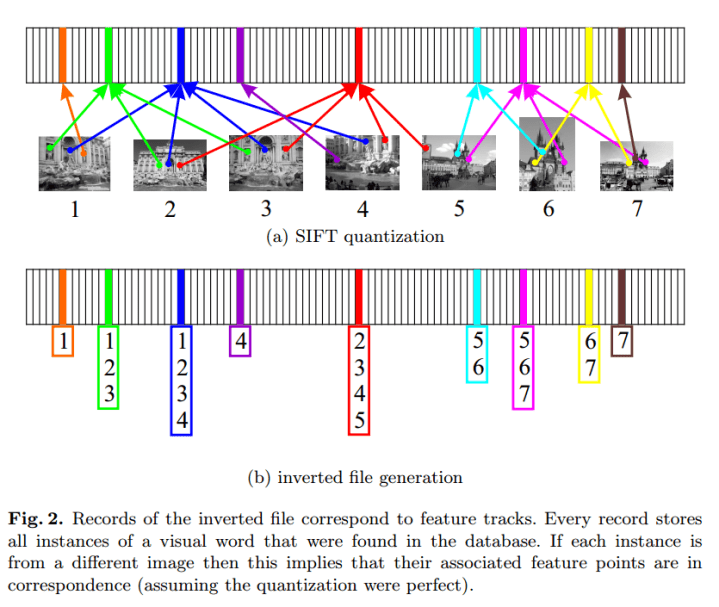

To this end, three approaches are being considered for incremental improvements. Matching speed could be improved by using Cascade Hashing matching or a Bag of Words based method. Memory improvements could come via improved correspondence graph data structures, and possibly via SLAM-like pose-graph methods that adjust camera positions globally without running a global bundle adjustment.
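To make the Bag of Words idea concrete, here is a minimal sketch of how it can cut down matching work: compare cheap per-image histograms of "visual words" first, and only send the most similar images on to full feature matching. The input format, the function names, and the `neighbors` parameter are assumptions made for illustration; this is not OpenSfM's implementation.

```python
import math
from collections import Counter


def cosine_similarity(hist_a, hist_b):
    """Cosine similarity between two sparse visual-word histograms."""
    dot = sum(count * hist_b[word] for word, count in hist_a.items())
    norm_a = math.sqrt(sum(c * c for c in hist_a.values()))
    norm_b = math.sqrt(sum(c * c for c in hist_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def candidate_pairs(image_words, neighbors=2):
    """Return the image pairs worth sending to full feature matching.

    image_words maps an image name to a list of visual-word ids
    (hypothetical input: assumes features were already quantized).
    """
    histograms = {name: Counter(words) for name, words in image_words.items()}
    pairs = set()
    for name, hist in histograms.items():
        # Rank every other image by histogram similarity, best first.
        scored = sorted(
            ((cosine_similarity(hist, other_hist), other)
             for other, other_hist in histograms.items() if other != name),
            reverse=True,
        )
        # Keep only the top few neighbours that share at least one word.
        for score, other in scored[:neighbors]:
            if score > 0:
                pairs.add(tuple(sorted((name, other))))
    return sorted(pairs)
```

The sketch still compares every pair of histograms, which is far cheaper than matching every pair of feature sets; a production system would more likely use an inverted index over the visual words to avoid even that.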

Large-scale pipeline

In addition to incremental improvements, for massive datasets we need an approach to splitting up our dataset into manageable chunks. If incremental improvements help us better and more quickly process datasets, the large-scale pipeline is the teeth of this approach — we need to cut and chew up our large datasets into smaller chunks to digest.

If for a given node I can process 1000 images efficiently, but I have 80,000 images, I need a process that splits my dataset into 80 manageable chunks and processes through them sequentially or in parallel until done. Maybe I have 9000 images? Then I need it split into 9 chunks.
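The arithmetic here is just a ceiling division. As a small sketch (the function name and the plain list of image names are assumptions for illustration), a naive split by list order looks like this:

```python
import math


def split_into_chunks(images, max_per_chunk):
    """Split a list of images into the fewest chunks of at most max_per_chunk."""
    if max_per_chunk <= 0:
        raise ValueError("max_per_chunk must be positive")
    # Round up so a partial final chunk is not dropped.
    n_chunks = math.ceil(len(images) / max_per_chunk)
    return [
        images[i * max_per_chunk:(i + 1) * max_per_chunk]
        for i in range(n_chunks)
    ]
```

With 80,000 images and a capacity of 1000 this yields 80 chunks, and 9000 images yield 9. Note that splitting by list order ignores where the images were taken, so a real split would group images spatially instead.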

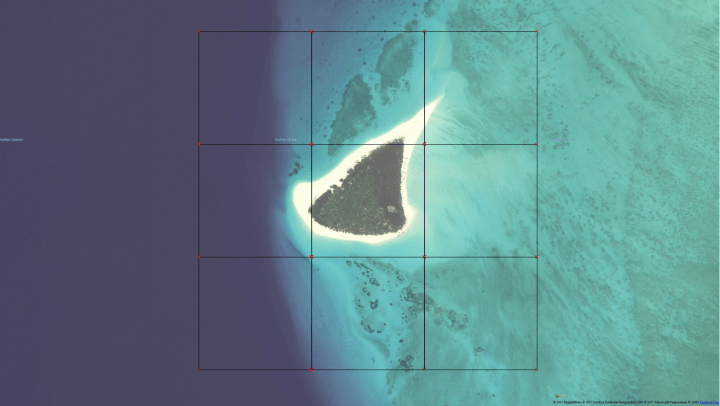

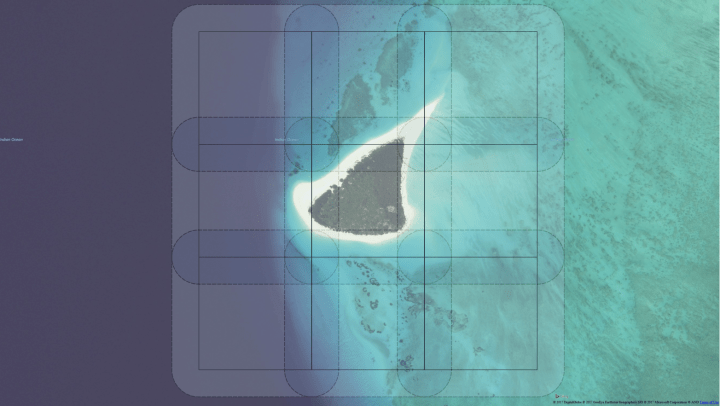

Eventually, I want to synthesize the outputs back into a single dataset. Ideally I split the dataset with some overlap as follows:
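In code, one simple way to get that overlap is to lay a grid over the camera positions and grow each tile by a buffer, so images near a border are assigned to every neighbouring group. This is a minimal sketch under stated assumptions: positions are already in projected metres, and `tile_size`, `overlap`, and the function name are hypothetical.

```python
import math
from collections import defaultdict


def split_with_overlap(positions, tile_size, overlap):
    """Group images into square tiles, sharing images near tile borders.

    positions maps an image name to (x, y) in metres (hypothetical input,
    e.g. projected from the GPS tags). Each tile is grown by `overlap`
    metres on every side before images are assigned to it.
    """
    if tile_size <= 0 or overlap < 0:
        raise ValueError("tile_size must be positive and overlap non-negative")
    groups = defaultdict(list)
    for name, (x, y) in sorted(positions.items()):
        # Range of tile columns/rows whose grown extent contains the image.
        col_min = math.floor((x - overlap) / tile_size)
        col_max = math.floor((x + overlap) / tile_size)
        row_min = math.floor((y - overlap) / tile_size)
        row_max = math.floor((y + overlap) / tile_size)
        for col in range(col_min, col_max + 1):
            for row in range(row_min, row_max + 1):
                groups[(col, row)].append(name)
    return dict(groups)
```

The shared images are what later make it possible to tie neighbouring reconstructions together, because the same features appear in more than one group.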

Problems with splitting SfM datasets

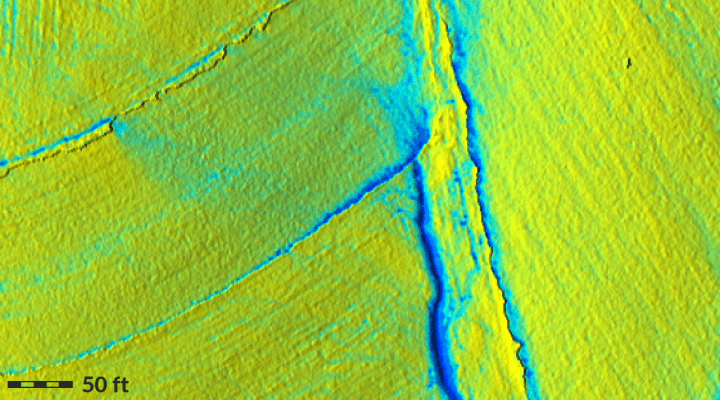

We do run into some very real problems when splitting our datasets into chunks for processing. There are a variety of issues, but the most stark is inconsistency between the resulting products. Quite often our X, Y, and Z values won’t match in the final reconstructions. This becomes critical when performing, e.g., hydrologic analyses on the resulting Digital Terrain Models.

Anna Petrasova et al. address merging disparate DEMs in GRASS in “Seamless fusion of high-resolution DEMs from multiple sources with r.patch.smooth”.

Anna describes and solves the problem of matching LiDAR and drone data, and assumes that the differences between the datasets are small enough that smoothing the transition between them is adequate. Unfortunately, when we process drone imagery in chunks, we can get translation, rotation, skewing, and a range of other differences that often cannot be accounted for when we’re processing the digital terrain model at the end.

What follows is a small video of a dataset split and processed in two chunks. Notice offsets, rotations, and other issues of mismatch in the X and Y dimensions, and especially Z.

When we see these differences in the resultant digital terrain model, the problem can be quite stark:

To address these issues we require both the approach that Anna proposes, which corrects and smooths out small differences, and a deeper approach specific to matching drone imagery datasets to address the larger problems.
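The smoothing half can be illustrated in one dimension. The sketch below blends two elevation profiles across their overlap with a linearly sliding weight. It is only in the spirit of that kind of blending and is not r.patch.smooth's actual algorithm; the function name and the assumption that both profiles are sampled on the same cells are mine.

```python
def blend_overlap(left, right):
    """Blend two elevation profiles sampled on the same overlap cells.

    The weight slides linearly from the left chunk to the right chunk, so
    the result equals `left` at the first cell and `right` at the last.
    """
    if len(left) != len(right):
        raise ValueError("profiles must cover the same cells")
    n = len(left)
    if n == 1:
        return [(left[0] + right[0]) / 2]
    blended = []
    for i, (a, b) in enumerate(zip(left, right)):
        w = i / (n - 1)  # 0 at the left edge, 1 at the right edge
        blended.append((1 - w) * a + w * b)
    return blended
```

A constant 2 m step between chunks becomes a gentle ramp across the overlap. That hides the seam, but it does not remove the underlying misalignment, which is why the deeper approach is still needed.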

Deeper approach to processing our bites of drone data

To ensure we are getting the most out of stitching these pieces of data back together at the end, we need a matching approach very similar to the one we use when matching images to each other. Our steps will be roughly as follows:

- Split our images into groups

- Run reconstruction on each group

- Align and transform those groups to each other using matching features between the groups

- For secondary products, like Digital Terrain Models, blend the outputs using an approach similar to r.patch.smooth.
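The align-and-transform step can be sketched in a deliberately simplified form. Assuming we already have the positions of the same features in two chunks, a least-squares fit recovers the scale, rotation, and translation between them. The sketch is 2D only and ignores Z, skew, and outliers; a real pipeline would need a 3D transform and a robust estimator. The function names are hypothetical.

```python
def fit_similarity_2d(source, target):
    """Least-squares scale, rotation and translation mapping source onto target.

    Points are (x, y) tuples of the same features seen in both chunks.
    Treating each point as a complex number z, the model is w = a * z + b,
    where a encodes scale and rotation and b the translation.
    """
    if len(source) != len(target) or len(source) < 2:
        raise ValueError("need at least two matched point pairs")
    z = [complex(x, y) for x, y in source]
    w = [complex(x, y) for x, y in target]
    z_mean = sum(z) / len(z)
    w_mean = sum(w) / len(w)
    # Closed-form least-squares solution on the centred points.
    num = sum((zi - z_mean).conjugate() * (wi - w_mean) for zi, wi in zip(z, w))
    den = sum(abs(zi - z_mean) ** 2 for zi in z)
    if den == 0:
        raise ValueError("source points must not all coincide")
    a = num / den
    b = w_mean - a * z_mean
    return a, b


def apply_similarity_2d(a, b, points):
    """Move points from the source chunk's frame into the target's frame."""
    moved = []
    for x, y in points:
        p = a * complex(x, y) + b
        moved.append((p.real, p.imag))
    return moved
```

Here `abs(a)` is the scale factor and the angle of `a` is the rotation. Fitting on the shared features and then applying the result to everything in one chunk brings it into the other chunk's frame before any blending of secondary products.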

In closing

I hope you enjoyed a little update on some of the upcoming features for OpenDroneMap. In addition to the above, we’ll also be wrapping in reporting and robustness improvements. More on that soon, as that is another huge piece that will help the entire community of users.

(This post CC BY-SA 4.0 licensed)

(Shout out to Pau Gargallo Piracés of Mapillary for the technical aspects of this write-up. He is not responsible for any of the mistakes, generalities, and distortions in the technical aspects. Those are all mine.)

Hi! I tried to follow the “splits my dataset into 80 manageable chunks” strategy by grouping images into different tasks under the same project in WebODM. But the result has some mismatch at the borders between orthophotos from different chunks, even though they actually use the same image files in those areas.

Do you have any suggestions to improve? Thanks!

I uploaded the screenshots to the issue on the OpenDroneMap GitHub repository, if you would like to see them: https://github.com/OpenDroneMap/OpenDroneMap/issues/652